The 3-Tier Trust Model: Securing High-Risk AI Tools

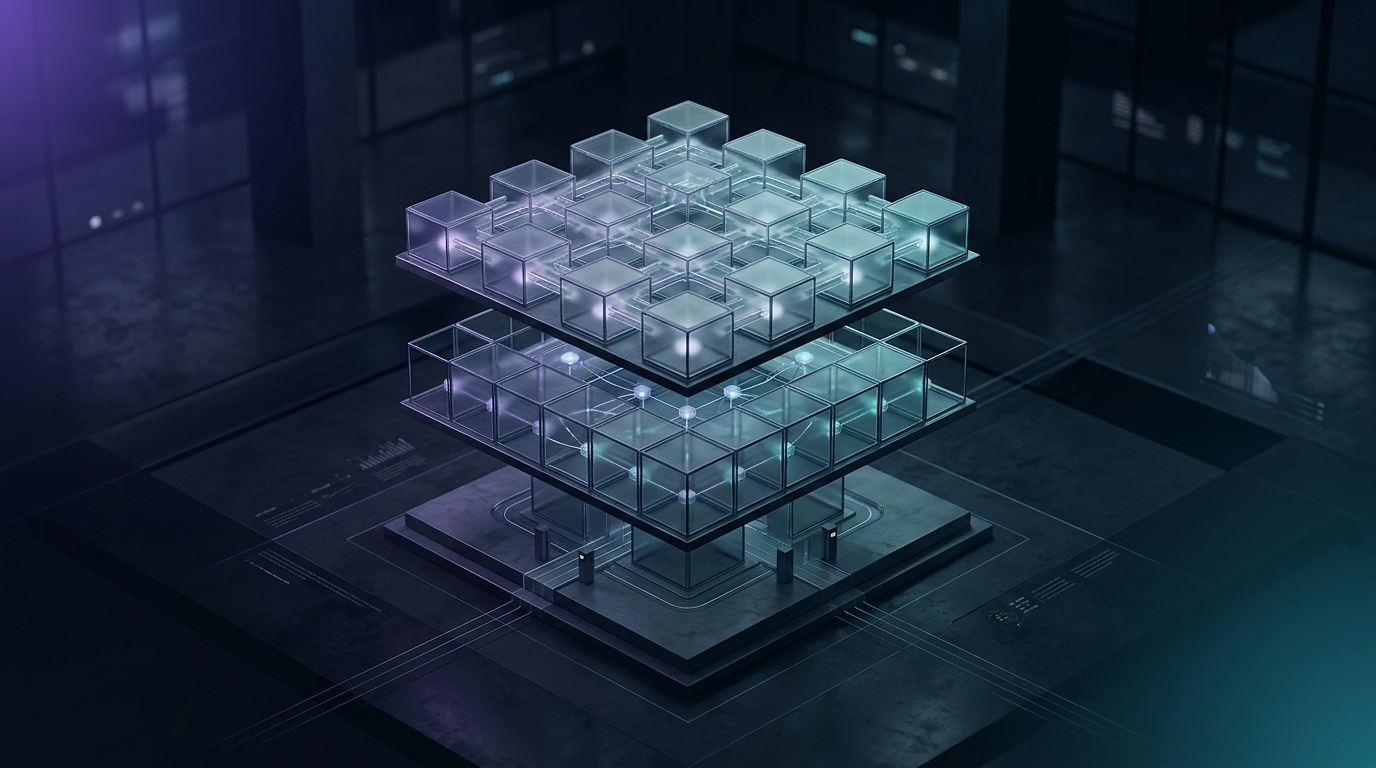

To secure high-risk AI tools, organizations must move from binary security (allow/block) to a tiered trust model that sorts capabilities into built-in tools, external plugins, and user-defined skills. This approach makes it possible to offer powerful features such as shell execution (Bash) behind layered defenses, including real-time monitoring and command filtering.

Key Takeaways

- Shift from Binary to Tiered Security: Not all AI tools require the same level of oversight; classification enables flexibility without compromising safety.

- The Three Levels of Trust: Understanding the difference between Built-in Tools, Plugins, and Skills is essential for secure system architecture.

- Case Study - The Bash Tool: How 18 separate security modules protect systems from destructive commands.

- Enterprise Implementation: Practical steps for embedding a trust model within your organization's AI infrastructure.

Why Binary Security Fails in the Age of AI Agents

Traditionally, information security relied on firewalls and simple access permissions. In the realm of AI, this approach is insufficient. If we completely block a Large Language Model (LLM) from accessing external systems, we strip away its business value. If we grant unrestricted access, we expose the organization to prompt injection attacks and data breaches.

The core challenge is that AI agents operate dynamically. They generate code on the fly and decide which tools to use to solve a problem. Therefore, we must build a system where the level of oversight matches the specific risk profile of the tool in use.

The Three Levels of Trust: Claude’s Architectural Framework

Claude, developed by Anthropic, utilizes a sophisticated approach that categorizes tools into three distinct tiers:

Tier 1: Built-in Tools

These are tools integrated directly into the system. They enjoy the highest level of trust because they are authored, tested, and optimized by the model's own development teams. Examples include file analysis or basic search functions. These tools are always available, and their inherent risk is relatively low.

Tier 2: Plugins

Plugins are external tools connected to the model to extend its capabilities (e.g., connecting to a CRM or project management system). The trust level here is medium. The system allows users or administrators to disable them instantly. This tier requires a security layer that inspects the input and output flowing between the model and the plugin.

Tier 3: User-defined Skills

This is the highest-risk tier. These are tools or functions defined by the end user. Because this code has not undergone a rigorous security audit, the system treats it with maximum caution (default-deny). Any action in this tier requires explicit approval or execution within a fully isolated sandbox environment.
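The three tiers can be sketched as a small policy table. This is a minimal illustration, not Claude's actual implementation: the tool names, the `Policy` fields, and the `policy_for` helper are all hypothetical. Tier 3 is modeled conservatively here as requiring both approval and a sandbox, although the text above asks for at least one of the two.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    BUILT_IN = 1     # authored and tested by the platform's own teams
    PLUGIN = 2       # external integration that can be disabled
    USER_SKILL = 3   # user-defined, unaudited code


@dataclass(frozen=True)
class Policy:
    inspect_io: bool       # inspect inputs and outputs crossing the boundary
    needs_approval: bool   # require explicit human approval
    sandboxed: bool        # run in an isolated environment


# Hypothetical mapping; real values depend on your own risk assessment.
POLICIES = {
    Tier.BUILT_IN: Policy(inspect_io=False, needs_approval=False, sandboxed=False),
    Tier.PLUGIN: Policy(inspect_io=True, needs_approval=False, sandboxed=False),
    Tier.USER_SKILL: Policy(inspect_io=True, needs_approval=True, sandboxed=True),
}

# Hypothetical tool registry used only for this example.
TOOL_TIERS = {
    "file_analysis": Tier.BUILT_IN,
    "crm_connector": Tier.PLUGIN,
}


def policy_for(tool_name: str) -> Policy:
    """Return the policy for a tool; unregistered tools get the strictest tier."""
    tier = TOOL_TIERS.get(tool_name, Tier.USER_SKILL)
    return POLICIES[tier]
```

Falling back to the strictest tier for any unregistered tool is one way to express default-deny: a tool has to be explicitly classified before it receives more trust.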

Case Study: Protecting the Bash Tool with 18 Security Modules

One of the most powerful yet dangerous tools an AI agent can possess is the Bash tool: the ability to run commands directly in a terminal. A single wrong command (such as rm -rf /) can wipe an entire server.

Rather than relying solely on sandboxing, Claude employs an architecture featuring 18 separate security modules, including:

- Forbidden Command Filtering: A blacklist of dangerous commands.

- Suspicious Pattern Detection: Using Regular Expressions (Regex) to identify bypass attempts.

- Resource Limiting: Preventing the model from running processes that consume all CPU or memory.

- Human Approval for Destructive Actions: The system identifies commands that could modify data and mandates a manual confirmation.

This granular approach demonstrates that high-utility features can be enabled safely, provided the defense mechanism is broken down into small, specific modules.
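A few of these checks can be approximated in a short screening function. The sketch below is an assumption-laden illustration, not the actual modules: the command lists, the regular expressions, and the `screen` function are invented for this example and are far from complete.

```python
import re
import shlex
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    ASK_HUMAN = "ask_human"
    BLOCK = "block"


# Illustrative lists only; a real deployment needs far broader coverage.
FORBIDDEN = {"mkfs", "shutdown", "reboot"}
DESTRUCTIVE = {"rm", "mv", "dd", "chmod", "chown", "truncate"}
SUSPICIOUS = [
    re.compile(r"[;&|]"),    # chaining or piping could hide a second command
    re.compile(r"\$\(|`"),   # command substitution
]


def screen(command: str) -> Verdict:
    """Classify a single shell command before it is executed."""
    # Suspicious pattern detection: block common bypass constructs outright.
    for pattern in SUSPICIOUS:
        if pattern.search(command):
            return Verdict.BLOCK
    try:
        tokens = shlex.split(command)
    except ValueError:
        # Unbalanced quotes and similar: refuse what cannot be parsed.
        return Verdict.BLOCK
    if not tokens:
        return Verdict.BLOCK
    # Compare on the program name so "/sbin/mkfs" is treated like "mkfs".
    program = tokens[0].rsplit("/", 1)[-1]
    if program in FORBIDDEN:
        return Verdict.BLOCK
    if program in DESTRUCTIVE:
        return Verdict.ASK_HUMAN
    return Verdict.ALLOW
```

Each check stays small and independent, so one can be tightened or replaced without touching the others. A screen like this complements a sandbox and resource limits; it does not replace them.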

Implementing a Tiered Trust Model in Your Organization

If you are building internal AI agents, consider adopting these principles:

- Tool Mapping: Inventory all tools your AI can access and rank them by risk level (Low, Medium, High).

- Environment Isolation: Ensure that Tier 3 tools always run within isolated containers without access to the core corporate network.

- Observability: Maintain detailed logs of every external tool call. Which model initiated it? What was the input? What was the result?
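The observability item can be sketched as a thin wrapper that records every tool call. This is a minimal example under stated assumptions: `audited_call` and the record fields are hypothetical names, and an in-memory list stands in for whatever log sink you actually use.

```python
import json
import time
from typing import Any, Callable


def audited_call(model_id: str, tool_name: str, tool: Callable[..., Any],
                 log: list, **kwargs: Any) -> Any:
    """Call a tool and append one JSON record answering: who, what input, what result."""
    record = {"ts": time.time(), "model": model_id, "tool": tool_name, "input": kwargs}
    try:
        result = tool(**kwargs)
        record["status"] = "ok"
        record["result"] = repr(result)
        return result
    except Exception as exc:
        record["status"] = "error"
        record["result"] = repr(exc)
        raise
    finally:
        # The record is written whether the call succeeded or failed.
        log.append(json.dumps(record, default=str))
```

For example, `audited_call("model-a", "add", lambda a, b: a + b, log, a=2, b=3)` returns the tool's result and leaves one JSON line in `log`. Inputs and results may contain sensitive data, so a production version would likely need redaction before writing.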

Conclusion

The future of enterprise AI depends on our ability to give models "hands and feet", that is, access to tools. The 3-tier trust model provides a roadmap for doing this safely. By separating tool types and applying targeted protections like those seen in the Bash tool, companies can get the most out of the technology without putting their digital assets at risk.

FAQ

Q: What is a tiered trust model in AI? A: It is a method of categorizing AI tools based on their risk level, where each level has different security and approval mechanisms instead of applying a one-size-fits-all policy.

Q: Why is the Bash tool considered so high-risk? A: Because it allows the AI model to interact directly with the operating system, which could lead to file deletion, server configuration changes, or data leaks if clear boundaries are not set.

Q: Can we trust the AI to secure itself? A: No. Security must be implemented at the architectural level outside the model (Guardrails), as demonstrated by Claude's 18 security modules.

Q: How can a small organization start implementing this? A: Start by defining a "whitelist" of allowed commands and tools, and ensure that any significant action requires human-in-the-loop confirmation.
